Agentic Analytics Are Here: Why Your AI Agents Still Can't Answer Real Business Questions

Google Trends tells the story: search interest in 'agentic AI' went from near-zero to breakout status in under twelve months. But here's the uncomfortable reality: your AI agents can't answer real business questions. They can write emails and summarize meetings, but they can't tell you which customer segment is churning or how Q4 margins compare to Q3. The problem isn't intelligence. It's plumbing. SparteraConnect fixes this with discrete, pre-built analytics objects served through managed MCP servers, not fragile NL2SQL. Full usage lineage, insight caching, and dual-mode deployment for both internal intelligence and external monetization.

The Year Every Company Wanted AI Agents — And None of Them Could Do Math

Google Trends tells the story: search interest in 'agentic AI' went from near-zero to breakout status in under twelve months. Every enterprise software vendor now claims to support AI agents. Every analyst deck has a slide about autonomous workflows. Every CTO has a roadmap that includes agents doing something.

But here's the uncomfortable reality most of those roadmaps don't address: your AI agents can't answer real business questions.

Ask an agent, 'What drove our revenue growth last quarter?' and you'll get one of two responses: a vague summary pulled from cached documents, or a polite deflection suggesting you check your BI dashboard. The agent can write your emails, summarize your meeting notes, and draft your performance reviews. It cannot tell you which customer segment is churning, what your inventory forecast looks like, or how your Q4 margins compare to Q3.

That's not an intelligence problem. It's a plumbing problem. Your structured data (the metrics, KPIs, trends, forecasts, and predictions living in your data warehouses, relational databases, and ML models) has no pathway to your agents. And the approaches the industry is converging on to fix this are, frankly, fragile.

The Agentic Analytics Gap

The NL2SQL Trap: Why 'Just Let AI Write SQL' Doesn't Work in Production

The most popular approach to connecting AI to structured data is Natural Language to SQL (NL2SQL): let the AI interpret a question, generate a SQL query on the fly, execute it against a database, and return the results.

In demos, it's magical. In production, it's a liability.

The academic community has been candid about this. Researchers from Microsoft published a paper with the blunt title 'NL2SQL is a solved problem... Not!' — documenting how enterprise-grade NL2SQL remains far from reliable despite years of progress. The best models plateau around 85% accuracy on carefully curated benchmark datasets like Spider. In real enterprise environments with messy schemas, ambiguous terminology, and complex joins, accuracy drops significantly. When researchers tested models with simple paraphrased versions of the same questions, accuracy fell by 20 percentage points on some models.

The failure modes aren't subtle. NL2SQL systems routinely confuse comparison operators, drop filtering conditions, hallucinate JOIN paths, and, most dangerously, generate plausible-looking SQL that executes successfully but returns the wrong answer. A January 2026 research paper found that state-of-the-art systems tend to force answers rather than abstaining, producing 'plausible but semantically incorrect SQL' when they should be asking for clarification. The system confidently gives you wrong numbers. And you often have no way of knowing.
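To make the "executes successfully but returns the wrong answer" failure concrete, here is a minimal sketch using an in-memory SQLite database. The table, the values, and the "generated" query are all invented for illustration; the point is only that a query with a dropped filter runs cleanly and returns a believable number.

```python
import sqlite3

# Toy orders table; schema and values are invented for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (region TEXT, quarter TEXT, status TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [
        ("EMEA", "Q4", "completed", 1000.0),
        ("EMEA", "Q4", "refunded", 400.0),
        ("EMEA", "Q3", "completed", 800.0),
        ("APAC", "Q4", "completed", 500.0),
    ],
)

# What the business user meant: completed EMEA revenue in Q4.
intended_sql = """
    SELECT SUM(amount) FROM orders
    WHERE region = 'EMEA' AND quarter = 'Q4' AND status = 'completed'
"""

# A plausible generated query that silently drops the status filter.
generated_sql = """
    SELECT SUM(amount) FROM orders
    WHERE region = 'EMEA' AND quarter = 'Q4'
"""

intended_total = conn.execute(intended_sql).fetchone()[0]
generated_total = conn.execute(generated_sql).fetchone()[0]

print(intended_total)   # 1000.0
print(generated_total)  # 1400.0 (no error, just a different number)
```

Neither query raises an error, and nothing in the second result signals that refunded orders were included.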

The Semantic Layer Tax

Some analytics vendors have invested heavily in making NL2SQL more reliable. ThoughtSpot's approach, for example, uses LLMs only for intent understanding, then translates natural language into proprietary 'search tokens' that generate deterministic SQL. They claim this eliminates hallucinations because the SQL generation step itself is predictable once the tokens are resolved.

It's a meaningful architectural improvement over raw NL2SQL. But it comes with a significant cost: you have to build and maintain a comprehensive semantic layer first. That means modeling your data in ThoughtSpot's worksheet format, defining business glossaries, establishing synonym mappings, and continuously coaching the system with human-in-the-loop feedback. Their own documentation describes a process where 'analysts can curate feedback and provide model instructions' so the system improves over time.

That's not a 60-second setup. It's a multi-month data engineering project that has to finish before a single business user asks their first question. And with pricing that starts at $1,250+ per month before you've written your first query, the total cost of ownership, once that engineering time is counted, can quickly reach six figures annually.

Even after all that investment, you're still generating SQL at runtime. Every question produces a novel query against your database. The system is exploratory by design, optimized for ad-hoc human Q&A, not for the deterministic, repeatable, auditable consumption patterns that AI agents require.

The Bigger Problem: Who's Watching?

Here's what nobody talks about: when NL2SQL generates a query, who validates it? When a dashboard gets passed around as a PDF or an agent generates an ad-hoc answer from dynamically constructed SQL, there is no audit trail connecting the specific question, the specific parameters, and the specific user or agent that initiated the request.

You can't trace what happened. You can't reproduce the exact query. You can't tell your compliance team precisely what data was accessed, by whom, with what parameters, at what time. In regulated industries, this isn't a nice-to-have — it's a dealbreaker.

The Audit Trail Problem

• Dashboards & PDFs: No record of who consumed what insight, when

• NL2SQL: Novel SQL generated at runtime, unreproducible and unauditable

• Excel files: Emailed, copied, modified. Zero lineage

When your CFO asks, 'Where did that revenue figure come from?', you need a better answer than 'the AI wrote some SQL.'

A Different Architecture: Discrete Analytics, Not Dynamic Queries

SparteraConnect takes a fundamentally different approach. Instead of generating SQL on the fly and hoping the AI interprets your question correctly, every analytic is a discrete, pre-built object that has been designed, tested, and approved before any agent ever calls it.

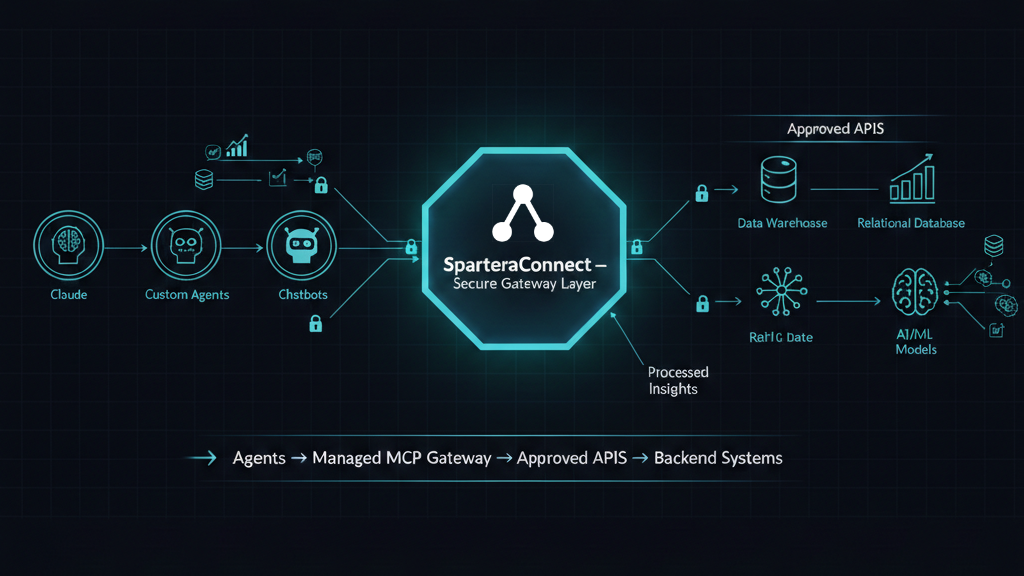

Figure: The SparteraConnect Architecture (diagram labels: internal & third-party; Cache · Lineage · Security; parameterized analytics; AI/ML Models). Only processed insights flow back to agents. Raw data never moves.

Each step in that chain is intentional, secured, and auditable.

Five Capabilities That Change the Game

1. Connect to Your Data — Wherever It Lives

SparteraConnect supports 13+ data platforms across every major cloud provider and database ecosystem:

| Category | Supported Platforms |
|---|---|
| Amazon Web Services | Redshift, Redshift Serverless, Aurora (MySQL, PostgreSQL, Serverless), RDS MySQL |
| Google Cloud Platform | BigQuery, Cloud SQL (MySQL, PostgreSQL) |
| Multi-Cloud | Snowflake, Teradata Vantage |
| Databases | Microsoft SQL Server, Supabase (PostgreSQL, Analytics) |
| External | Any REST API endpoint via External API Integration |

Setup is straightforward: provide your connection credentials, and Spartera establishes a secure, read-only link. Your data never moves. All connections are encrypted, credential-secured, and network-isolated. Full details in our data sources documentation.

2. Create Insights with Point-and-Click — Not Code

This is the core differentiator. Instead of asking an AI to write SQL at runtime, you use Spartera's UI to create each analytic as a discrete, parameterized object. Think of each analytic as a pre-built, tested, and approved function that agents can call, not an open-ended query interface.

Each analytic can be parameterized for customization. A single 'Revenue by Region' analytic can accept parameters like region, date_range, and comparison_period, scaling one insight to answer dozens of variations of the same business question. The agent calls a known function with specific parameters and gets a deterministic, validated result every time.

No runtime SQL generation. No hallucinated JOINs. No ambiguous intent parsing. The analytics team designs and tests the insight once; agents consume it reliably at scale.
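As a rough illustration of what "a known function with specific parameters" can look like, the sketch below models an analytic as fixed SQL plus a whitelist of accepted parameter values. This is not Spartera's implementation: the `Analytic` class, the `sales` table, and the simplified `date_range` values (quarter labels) are assumptions made for the example.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class Analytic:
    """Hypothetical pre-built analytic: fixed SQL plus allowed parameter values."""
    name: str
    sql: str
    allowed: dict  # parameter name -> set of accepted values

    def run(self, conn, **params):
        # Reject missing or unexpected parameters before touching the database.
        if set(params) != set(self.allowed):
            raise ValueError(f"expected parameters {sorted(self.allowed)}")
        for key, value in params.items():
            if value not in self.allowed[key]:
                raise ValueError(f"invalid value for {key}: {value!r}")
        # The SQL text never changes; only bound values vary between calls.
        return conn.execute(self.sql, params).fetchone()[0]


# Toy data, invented for illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, quarter TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [("EMEA", "Q4", 1000.0), ("EMEA", "Q3", 800.0), ("APAC", "Q4", 500.0)],
)

revenue_by_region = Analytic(
    name="revenue_by_region",
    sql=(
        "SELECT COALESCE(SUM(amount), 0) FROM sales "
        "WHERE region = :region AND quarter = :date_range"
    ),
    allowed={"region": {"EMEA", "APAC"}, "date_range": {"Q3", "Q4"}},
)

print(revenue_by_region.run(conn, region="EMEA", date_range="Q4"))  # 1000.0
```

The caller can vary `region` and `date_range`, but it cannot change the query itself, which is what makes the result repeatable and reviewable in advance.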

3. Cache, Schedule, and Optimize

Not every analytic needs to hit the database in real time. SparteraConnect provides insight-level caching and scheduling for analytics that involve long-running queries or produce results that don't change minute-to-minute.

Run your quarterly revenue roll-up on a schedule and cache the result. Let agents consume the cached insight in milliseconds rather than waiting for a 30-second warehouse query. When the data refreshes, the cache updates automatically.

This is especially valuable for expensive analytical queries: the kind that would cost real money in compute every time an NL2SQL system decides to run them ad hoc.
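The idea of insight-level caching can be sketched as a cache keyed by analytic name and parameters. The example below uses simple time-based expiry as a stand-in for refresh-driven updates; `InsightCache` and its method names are hypothetical and do not describe how SparteraConnect's cache works internally.

```python
import time


class InsightCache:
    """Hypothetical per-analytic cache with time-based expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store = {}

    def get_or_compute(self, analytic_name, params, compute):
        # One cache entry per (analytic, parameter set).
        key = (analytic_name, tuple(sorted(params.items())))
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]  # fresh enough: skip the warehouse query
        value = compute()
        self._store[key] = (now, value)
        return value


calls = []


def slow_rollup():
    # Stands in for an expensive warehouse query.
    calls.append(1)
    return 42_000.0


fake_now = [0.0]
cache = InsightCache(ttl_seconds=3600, clock=lambda: fake_now[0])

first = cache.get_or_compute("quarterly_revenue", {"quarter": "Q4"}, slow_rollup)
second = cache.get_or_compute("quarterly_revenue", {"quarter": "Q4"}, slow_rollup)
fake_now[0] = 4000.0  # move past the TTL
third = cache.get_or_compute("quarterly_revenue", {"quarter": "Q4"}, slow_rollup)

print(len(calls))  # 2: the second request was served from cache
```

Because the key includes the parameters, the same analytic called with a different quarter would get its own cache entry.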

4. Full Usage Lineage: Who Called What, When, With What Parameters

Complete Audit Trail — Every Request, Every Time

• Who (or what) initiated the request: specific user, agent, or system

• Which analytic was called: the discrete insight object, not a blob of SQL

• What parameters were passed: exact customization applied to this call

• When the request was made: precise datetime stamping

This isn't session-level logging. It's analytic-level, parameter-level, timestamped lineage: a complete audit trail of every insight consumed through your MCP server.

You know exactly what every agent accessed, how they customized it, and when. Compare that to the alternative: an NL2SQL system where an agent generates a novel SQL query, executes it, gets a result, and nobody records the specific logic that produced that specific number.
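One way to picture analytic-level lineage is as a small structured record written on every call. The sketch below captures the four fields listed above (who, which analytic, what parameters, when); the `LineageRecord` type, its field names, and the caller label are assumptions for illustration, not Spartera's actual log schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    """Hypothetical audit entry for one analytic call."""
    caller: str       # user, agent, or system that initiated the request
    analytic: str     # the discrete analytic that was called
    parameters: dict  # exact parameters passed on this call
    called_at: str    # ISO 8601 timestamp in UTC


def record_call(log, caller, analytic, parameters, now=None):
    # Default to the current UTC time; accept an explicit time for testing.
    now = now or datetime.now(timezone.utc)
    record = LineageRecord(caller, analytic, dict(parameters), now.isoformat())
    log.append(record)
    return record


audit_log = []
record_call(
    audit_log,
    caller="agent:planning",
    analytic="revenue_by_region",
    parameters={"region": "EMEA", "date_range": "Q4"},
    now=datetime(2026, 1, 15, 9, 30, tzinfo=timezone.utc),
)

print(json.dumps(asdict(audit_log[0]), sort_keys=True))
```

With records like this, "where did that number come from?" becomes a lookup: the analytic name points to reviewed logic, and the parameters and timestamp make the call reproducible.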

5. Managed MCP Server — No DevOps Required

SparteraConnect deploys as a fully managed MCP server. You don't manage infrastructure, patch security vulnerabilities, handle protocol updates, or worry about scaling. We handle all of it, including staying current with the latest MCP specification as the protocol evolves.

Pricing is simple: $100/month for the managed server plus usage-based API calls. No opaque enterprise negotiations. No six-figure contracts. No surprise compute bills.

Two Modes: Internal Intelligence and External Monetization

Most analytics platforms force you to pick a lane. SparteraConnect serves both, from the same infrastructure.

Internal: Chat With Your Analytics

Deploy a SparteraConnect MCP server for your organization and your team gets conversational access to curated analytics.

Product managers ask about adoption metrics. Sales directors query pipeline forecasts. Executives get margin analysis. Nobody writes SQL. Nobody waits three days for an analyst to pull a report.

Agent-to-agent orchestration: Your planning agent calls your forecasting analytics. Your customer success agent pulls churn predictions. Your operations agent queries inventory models. Each call hits a tested, parameterized analytic, not a dynamically generated query.
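On the wire, an MCP tool invocation is a JSON-RPC 2.0 request with the method `tools/call`, carrying a tool name and an arguments object. The sketch below builds such a request for a churn analytic; the tool name `churn_prediction` and its arguments are hypothetical, since the tools a given server exposes depend on the analytics that were published to it.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request for an MCP tools/call invocation."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical analytic exposed as a tool; name and arguments are assumptions.
request = build_tool_call(
    request_id=1,
    tool_name="churn_prediction",
    arguments={"segment": "enterprise", "date_range": "Q4"},
)

print(json.dumps(request, indent=2))
```

The agent supplies only a tool name and parameters. There is no SQL in the request, which is the practical difference from an NL2SQL integration.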

External: Monetize Your Intelligence

Deploy an MCP server with analytics you want to sell, and third-party agents can discover, query, and pay for your insights, with no data transfer and no model transfer.

Your IP stays protected. Agents send parameters, receive results. The underlying data never moves. The underlying queries are never exposed. You set per-query pricing. Spartera handles billing and pays you via Stripe.
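Per-query pricing amounts to multiplying call counts by a price per analytic. The following sketch totals charges per buyer from a list of calls; the analytic names, prices, and buyer labels are invented for the example, and real billing and Stripe payouts are handled by the platform rather than by code like this.

```python
from collections import Counter
from decimal import Decimal

# Hypothetical per-query prices set by the seller.
PRICE_PER_QUERY = {
    "forecast_7day": Decimal("0.05"),
    "risk_score": Decimal("0.25"),
}


def bill(calls):
    """Total charges per buyer from a list of (buyer, analytic) calls."""
    counts = Counter(calls)
    totals = {}
    for (buyer, analytic), n in counts.items():
        charge = PRICE_PER_QUERY[analytic] * n
        totals[buyer] = totals.get(buyer, Decimal("0")) + charge
    return totals


calls = [("retail-agent", "forecast_7day")] * 3 + [("lending-agent", "risk_score")] * 2
totals = bill(calls)

print(totals["retail-agent"])   # 0.15
print(totals["lending-agent"])  # 0.50
```

`Decimal` is used instead of floats so that small per-query amounts add up without rounding drift.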

Examples: A weather analytics company monetizes predictive forecasts to retail agents. A financial services firm sells risk scoring to lending agents. A sports analytics provider distributes performance predictions to media platforms.

McKinsey and Bain both published major reports in 2025-2026 validating exactly this model. Bain specifically called out MCPs as enabling 'just-in-time' data monetization where agents pay per insight, activating data only when it supports a live decision. SparteraConnect is the platform that makes that thesis operational.

How SparteraConnect Compares to Analytics Companies in the MCP Space

The managed MCP space is getting crowded fast. Here's how SparteraConnect differs from the analytics companies building in this space:

| Capability | SparteraConnect | CData Connect AI | ThoughtSpot / Qlik / Oracle |
|---|---|---|---|
| Core Approach | Curated, parameterized analytics objects | Data connectivity: agents query via SQL layer | NL2SQL with semantic layer / proprietary tokens |
| Runtime SQL Generation | None: pre-built & tested | Yes: ad-hoc at runtime | Yes: via tokens or Logical SQL |
| Insight Caching & Scheduling | Per-analytic level | Basic caching | Platform-level only |
| Usage Lineage | User → Analytic → Parameters → Datetime | Basic audit logging | Session-level |
| External Monetization | Built-in marketplace + Stripe payouts | None | None |
| Cross-Platform Data Sources | 13+ (AWS, GCP, Snowflake, SQL Server, etc.) | 300+ SaaS connectors | Own platform only |
| Setup Complexity | Point-and-click, 60 seconds | Point-and-click connectors | Multi-month semantic layer buildout |
| Starting Price | $100/mo + usage | Enterprise pricing (opaque) | $1,250+/mo (ThoughtSpot) |

The key distinction: CData gives agents a pipe to your database. That is excellent for connectivity, but agents still generate queries at runtime with no curation layer. ThoughtSpot, Qlik, and Oracle bolt MCP onto their existing BI platforms, which are designed for their own users and their own ecosystems, with no external monetization pathway.

SparteraConnect is the analytics distribution layer purpose-built for the agentic era: curated analytics objects with full lineage, served via managed MCP, for both internal intelligence and external monetization, across any data platform.

From Zero to Agentic Analytics in Four Steps

1. Connect Your Data

Point to your database or data warehouse. Secure, read-only connection. Supports BigQuery, Snowflake, Redshift, PostgreSQL, MySQL, SQL Server, and more.

2. Create Your Analytics

Use the point-and-click UI to build parameterized insights. Test and validate before any agent ever calls them. Configure caching and scheduling.

3. Deploy MCP Server

One click. Fully managed infrastructure with enterprise security, auto-scaling, and the latest MCP specification. No DevOps required.

4. Agents Start Querying

Internal agents get intelligence. External agents pay per query. Full lineage tracks every request. Your data never moves.

The Bottom Line

The agentic analytics era is here. Gartner, McKinsey, Bain, and every major cloud provider agree on this. AI agents will be the primary consumers of business intelligence within the next few years.

The question isn't whether your analytics need to be agent-accessible. The question is how.

You can bolt MCP onto an existing BI platform and hope that runtime SQL generation is accurate enough. You can build custom integrations and manage infrastructure yourself. Or you can treat analytics distribution the way it should be treated in an agentic world: as a set of curated, tested, parameterized, auditable objects served through a managed protocol with full lineage, available for both internal intelligence and external monetization.

That's what SparteraConnect does.

Connect your data platforms → Create parameterized analytics → Cache and schedule for performance → Distribute via managed MCP → Track full usage lineage → Monetize to third-party agents

Ready to Deploy?

Get started with SparteraConnect: managed MCP servers for your analytics.

Explore SparteraConnect

Spartera is the Analytics as a Service platform that enables companies to create, distribute, and monetize analytics through managed MCP servers, without moving data. Starting at $100/month.